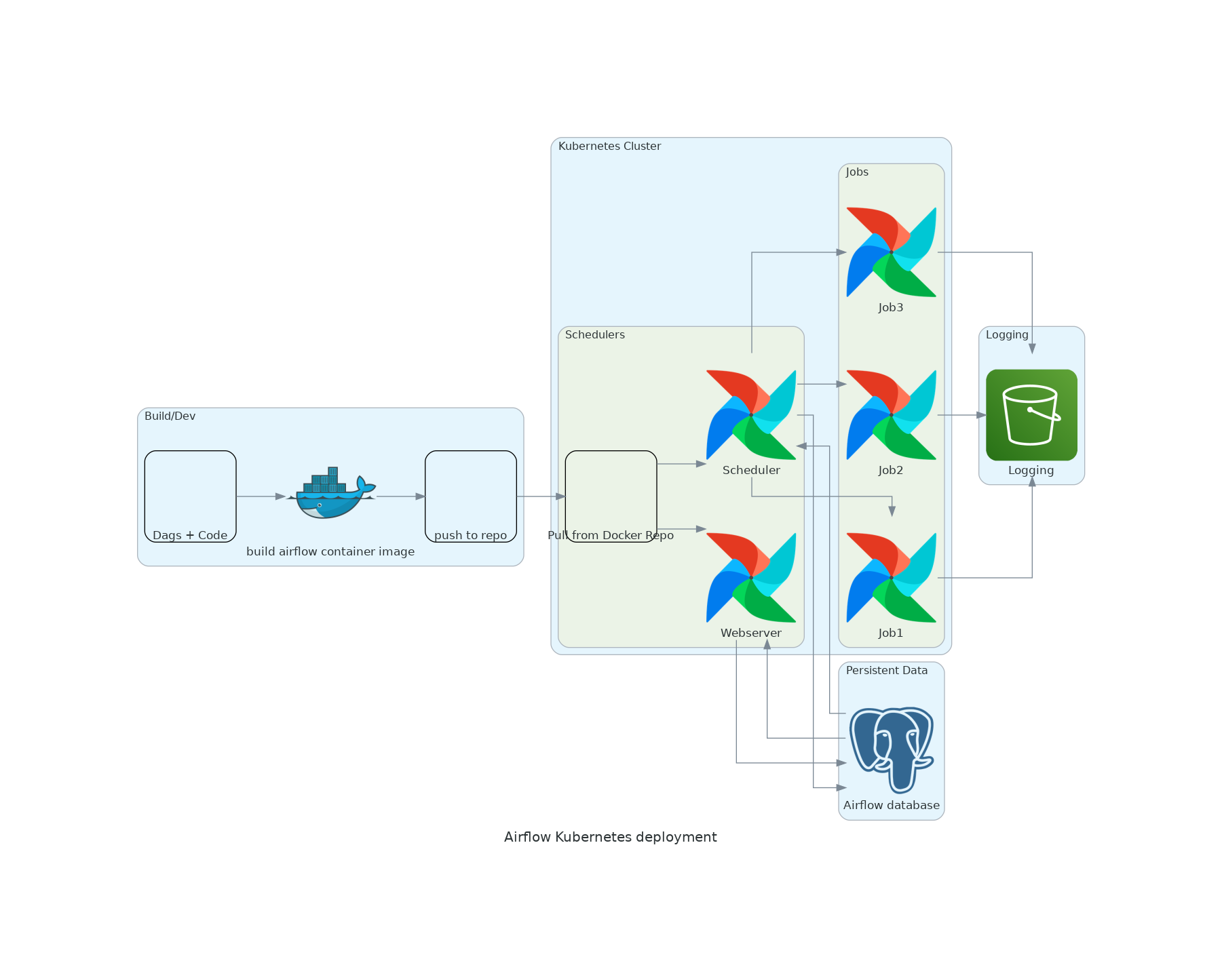

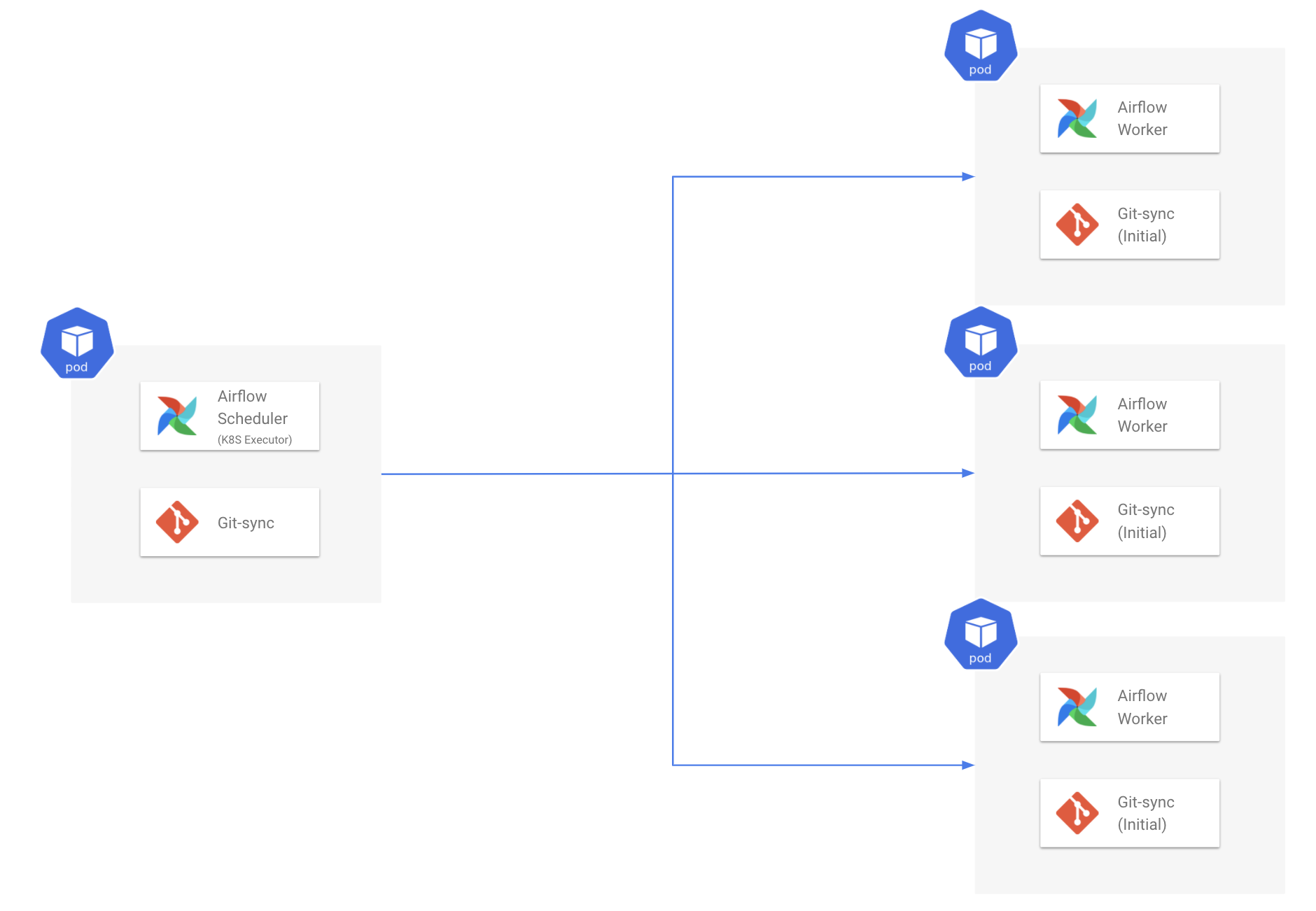

The KubernetesPodOperator allows you to create Pods on Kubernetes. A typical call passes arguments such as `namespace=self.namespace`, `image=self.image`, and a `name`, and a volume can be declared with `self.volume = Volume(name='test', configs=volume_config)`. An example DAG, documented as `'''Example DAG using KubernetesPodOperator.'''`, imports `datetime`, `models` from `airflow`, `Secret`, and `KubernetesPodOperator`; another variant imports `datetime`, `KubernetesPodOperator`, `Volume`, and `VolumeMount`.

Pod Mutation Hook: the Airflow local settings file (`airflow_local_settings.py`) can define a `pod_mutation_hook` function that mutates pod objects before they are sent to the Kubernetes client for scheduling.

CNCF is the open source, vendor-neutral hub of cloud native computing, hosting projects like Kubernetes and Prometheus to make cloud native universal.

To use the CeleryExecutor, you need to set up a Celery backend (RabbitMQ, Redis, ...), change your `airflow.cfg` to point the `executor` parameter to `CeleryExecutor`, and provide the related Celery settings. Using the KubernetesExecutor: the KubernetesExecutor natively runs any task in your DAG as a pod on Kubernetes.
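As a rough illustration of the pod mutation hook, the sketch below shows what an `airflow_local_settings.py` might contain. It assumes Airflow 2.x, where the hook receives a `kubernetes.client.models.V1Pod`; the label key and value are hypothetical and only there to show a mutation.

```python
# airflow_local_settings.py (sketch; the file must be importable by Airflow)


def pod_mutation_hook(pod):
    """Mutate a pod in place before Airflow submits it to Kubernetes.

    Assumption: `pod` is a kubernetes.client.models.V1Pod (Airflow 2.x).
    """
    # `labels` can be None on a freshly built pod, so fall back to an empty dict.
    labels = dict(pod.metadata.labels or {})
    labels["team"] = "data-eng"  # hypothetical label, chosen for illustration
    pod.metadata.labels = labels
```

The hook returns nothing: Airflow uses the same pod object after the call, so changes have to be made in place.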

A Kubernetes operator is one of the tools designed to push automation past its limits. The CeleryExecutor is one of the ways you can scale out the number of workers. The KubernetesPodOperator enables you to run containerized workloads from within your DAGs. Dataproc automation helps you create clusters quickly, manage them easily, and save money by turning clusters off when you don't need them.
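For the CeleryExecutor setup, the `airflow.cfg` changes can be sketched with Python's `configparser`. This is a minimal sketch, not a complete configuration: the broker and result backend URLs are placeholders for a local Redis and PostgreSQL, and a real deployment would carry many more settings.

```python
import configparser
import io


def celery_executor_config(broker_url, result_backend):
    """Render the airflow.cfg fragment that selects the CeleryExecutor."""
    # interpolation=None keeps any '%' characters in URLs from being interpreted.
    cfg = configparser.ConfigParser(interpolation=None)
    cfg["core"] = {"executor": "CeleryExecutor"}
    cfg["celery"] = {
        "broker_url": broker_url,          # e.g. RabbitMQ or Redis
        "result_backend": result_backend,  # where task states are stored
    }
    buffer = io.StringIO()
    cfg.write(buffer)
    return buffer.getvalue()


if __name__ == "__main__":
    # Placeholder URLs; replace them with the real broker and database.
    print(celery_executor_config(
        "redis://localhost:6379/0",
        "db+postgresql://airflow:airflow@localhost/airflow",
    ))
```

The same values can also be supplied as environment variables named `AIRFLOW__CORE__EXECUTOR`, `AIRFLOW__CELERY__BROKER_URL`, and `AIRFLOW__CELERY__RESULT_BACKEND`.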

With the help of Kubernetes, we provide Airflow with the ability to scale. One DAG example uses the KubernetesPodOperator to run a Docker container in Kubernetes from Airflow every 30 minutes.

This tutorial builds on the regular Airflow Tutorial and focuses specifically on writing data pipelines using the TaskFlow API paradigm, which was introduced as part of Airflow 2.0, and contrasts this with DAGs written using the traditional paradigm.

Airflow has a very extensive set of operators available, with some built into the core or pre-installed providers. Some popular operators from core include: BashOperator (executes a bash command), PythonOperator (calls an arbitrary Python function), and EmailOperator (sends an email). Use the `@task` decorator to execute an arbitrary Python function.

Dataproc is a managed Apache Spark and Apache Hadoop service that lets you take advantage of open source data tools for batch processing, querying, streaming, and machine learning.

I am trying to use the KubernetesPodOperator in Airflow, and there is a directory on my Airflow worker that I wish to share with the Kubernetes pod. Is there a way to mount the Airflow worker's directory into the pod? With the code I tried, the volume does not seem to be mounted successfully. I am running this on the cluster. I also cannot access the logs: if the tasks are killed before completion, the logs are never pushed.
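For the volume-mounting question, one possible approach is to back the shared directory with a PersistentVolumeClaim that both the Airflow worker and the pod can mount, since a pod generally cannot see a worker's local filesystem directly. The sketch below is an assumption-laden illustration: the claim name and mount path are hypothetical, the dict shape follows the legacy `Volume(name=..., configs=...)` call quoted earlier, and `to_k8s_models` assumes the `kubernetes` Python client is installed.

```python
def build_volume_config(claim_name):
    """Volume source settings, in the shape passed as Volume(configs=...)."""
    return {"persistentVolumeClaim": {"claimName": claim_name}}


def build_mount_args(volume_name, mount_path, read_only=False):
    """Arguments describing where the volume appears inside the container."""
    return {
        "name": volume_name,  # must match the volume's name
        "mount_path": mount_path,
        "sub_path": None,
        "read_only": read_only,
    }


def to_k8s_models(volume_name, volume_config, mount_args):
    """Build kubernetes client models, which newer providers expect instead."""
    from kubernetes.client import models as k8s  # lazy import: needs `kubernetes`

    claim = volume_config["persistentVolumeClaim"]["claimName"]
    volume = k8s.V1Volume(
        name=volume_name,
        persistent_volume_claim=k8s.V1PersistentVolumeClaimVolumeSource(
            claim_name=claim
        ),
    )
    mount = k8s.V1VolumeMount(**mount_args)
    return volume, mount


# Hypothetical names, for illustration only.
volume_config = build_volume_config("airflow-shared-data")
mount_args = build_mount_args("test", "/mnt/shared")
```

The resulting objects would then be passed to the operator through its `volumes` and `volume_mounts` arguments. A mount that appears to fail is often a name mismatch between the volume and the mount, which is why both helpers take the volume name explicitly.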
I cannot get the Airflow pod operator hello world example to run. The DAG file imports `DAG` from `airflow`, `datetime` and `timedelta` from `datetime`, `KubernetesPodOperator`, `configuration as conf` from `airflow`, `DummyOperator`, `PythonOperator`, and `days_ago`. It defines `default_args` and creates the operator with `name="airflow-test-pod"`, `task_id="task-one"`, `in_cluster=in_cluster`, `config_file=config_file`, `is_delete_operator_pod=True`, and `get_logs=True`. If `in_cluster` is set to true, the operator looks in the cluster; if false, it looks for the config file. A commented-out `cluster_context='docker-desktop'` is ignored when `in_cluster` is set to True.

I want to understand whether the error is caused by an out-of-memory event.

Answer: For the reason behind failed task instances, check the Airflow web interface > DAG's Graph View. The Kubernetes operator also accepts a `retries` option, but you should first understand the reason behind the failed tasks.
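A minimal hello world along the lines of the question might look like the sketch below. It is an illustration under several assumptions, not the asker's original code: it targets Airflow 2.x with the `cncf.kubernetes` provider, the import path and the `schedule_interval` and `is_delete_operator_pod` arguments may differ in newer releases, and the namespace, image, command, and `dag_id` are hypothetical. The 30-minute schedule follows the DAG example mentioned earlier.

```python
from datetime import datetime, timedelta


def pod_task_kwargs(in_cluster, config_file=None):
    """Keyword arguments for KubernetesPodOperator, mirroring the question."""
    kwargs = {
        "namespace": "default",       # hypothetical namespace
        "image": "python:3.11-slim",  # hypothetical image
        "cmds": ["python", "-c"],
        "arguments": ["print('hello world')"],
        "name": "airflow-test-pod",
        "task_id": "task-one",
        "in_cluster": in_cluster,
        "is_delete_operator_pod": True,  # remove the pod once the task ends
        "get_logs": True,                # stream container stdout to task logs
    }
    if not in_cluster:
        # Outside the cluster, config_file points the operator at a kubeconfig.
        kwargs["config_file"] = config_file
    return kwargs


def build_dag():
    """Assemble the DAG; imports are lazy so Airflow is only needed here."""
    from airflow import DAG
    from airflow.providers.cncf.kubernetes.operators.kubernetes_pod import (
        KubernetesPodOperator,  # assumption: older provider module path
    )

    dag = DAG(
        dag_id="kubernetes_pod_hello_world",
        start_date=datetime(2023, 1, 1),
        schedule_interval=timedelta(minutes=30),
        catchup=False,
    )
    KubernetesPodOperator(dag=dag, **pod_task_kwargs(in_cluster=True))
    return dag
```

In a DAG file, a module-level `dag = build_dag()` lets the scheduler discover the DAG. Keeping the arguments in a plain function makes it easy to check what the operator will receive before involving a cluster.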
